
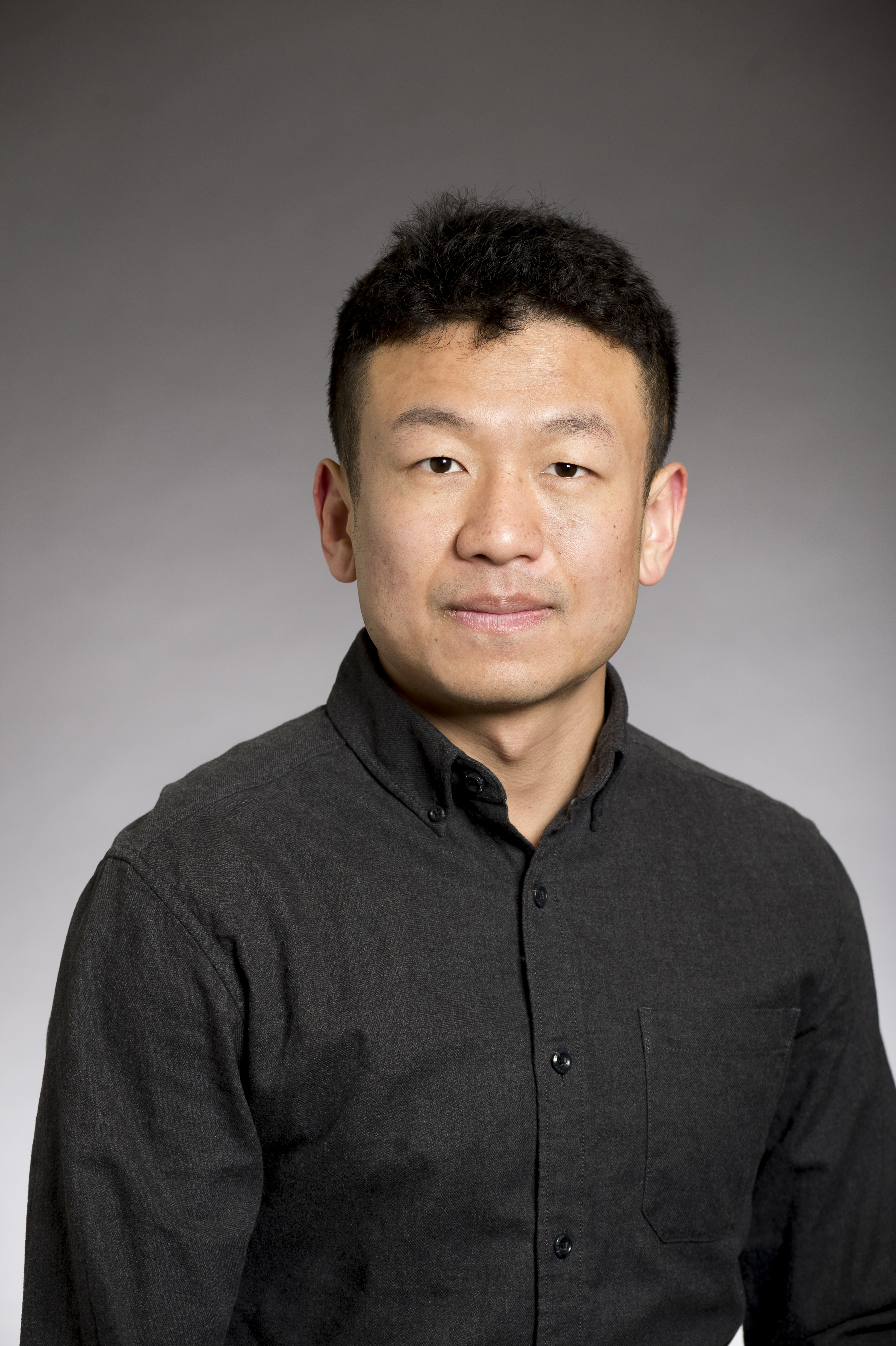

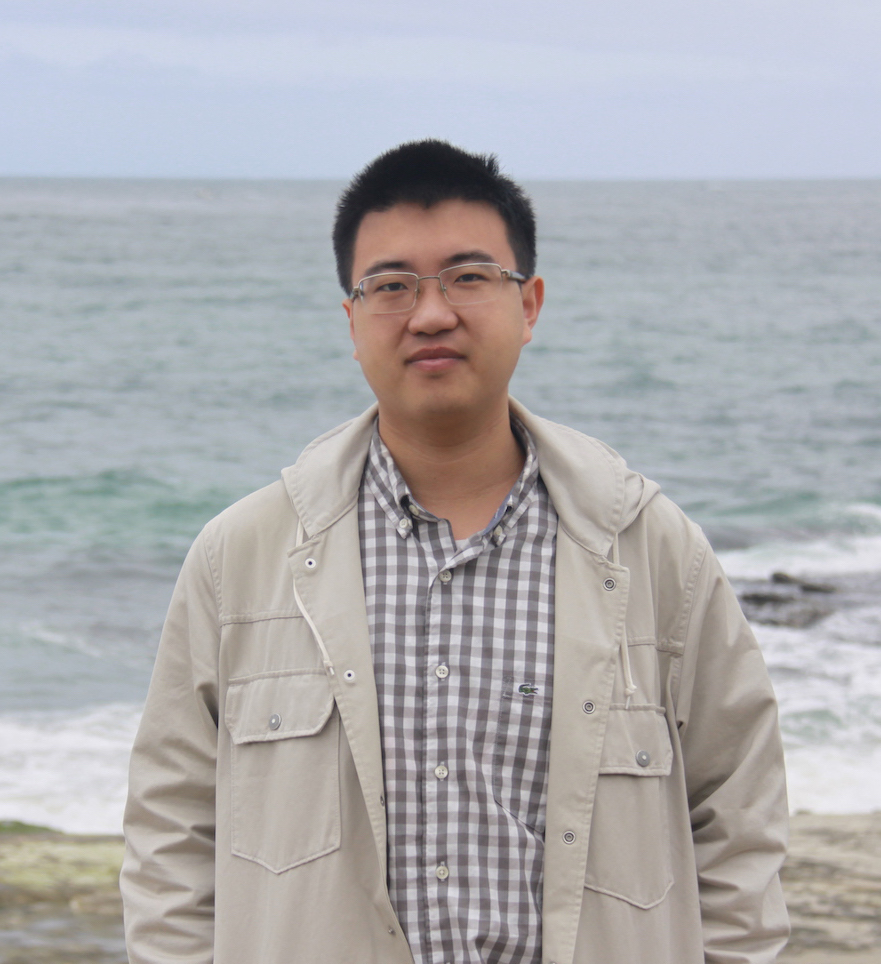

Speaker: Prof. Hongyang Zhang, University of Waterloo

This talk presents the EAGLE series, a family of speculative sampling methods that accelerate large language model inference without compromising output quality. Rather than predicting at the token level, EAGLE drafts at the more structured feature level and conditions on the sampling results to reduce uncertainty. The technique has seen significant industry adoption and is integrated into major inference frameworks, including vLLM, SGLang, and TensorRT-LLM, as well as several others from AWS and Intel.